“This is an exciting time for generative models,” said Anima Anandkumar, a computer scientist at the California Institute of Technology and senior director of machine learning research at Nvidia. And while the realistic-looking images created by diffusion models can sometimes perpetuate social and cultural biases, she said, “we have demonstrated that generative models are useful for downstream tasks [that] improve the fairness of predictive AI models.”

High Probabilities

To understand how creating data works for images, let’s start with a simple image made of just two adjacent grayscale pixels. We can fully describe this image with two values, based on each pixel’s shade (from zero being completely black to 255 being completely white). You can use these two values to plot the image as a point in 2D space.

If we plot multiple images as points, clusters may emerge - certain images and their corresponding pixel values that occur more frequently than others. Now imagine a surface above the plane, where the height of the surface corresponds to how dense the clusters are. This surface maps out a probability distribution. You’re most likely to find individual data points underneath the highest part of the surface, and few where the surface is lowest.

Now you can use this probability distribution to generate new images. All you need to do is randomly generate new data points while adhering to the restriction that you generate more probable data more often - a process called “sampling” the distribution.

The same analysis holds for more realistic grayscale photographs with, say, a million pixels each. Only now, plotting each image requires not two axes, but a million. The probability distribution over such images will be some complex million-plus-one-dimensional surface. If you sample that distribution, you’ll produce a million pixel values.
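The two-pixel picture of a probability distribution can be made concrete with a small sketch. The code below is only an illustration under stated assumptions: the training images are synthetic (two invented clusters of shade pairs), the names `images`, `probs` and `sample_images` are hypothetical, and a normalized histogram stands in for the probability surface. It is not how a diffusion model learns or samples a distribution; it only shows what “sampling” means when more probable pixel pairs are drawn more often.

```python
import numpy as np

rng = np.random.default_rng(0)

# Assumption: a synthetic "training set" of 5,000 two-pixel grayscale images,
# clustered around two invented shade pairs (one dark, one light).
dark = rng.normal(loc=(60, 80), scale=10, size=(2500, 2))
light = rng.normal(loc=(190, 170), scale=15, size=(2500, 2))
images = np.clip(np.rint(np.vstack([dark, light])), 0, 255).astype(int)

# Count how often each (pixel_1, pixel_2) pair occurs. The normalized counts
# play the role of the surface height above the 2D plane.
counts = np.zeros((256, 256))
np.add.at(counts, (images[:, 0], images[:, 1]), 1)
probs = counts / counts.sum()


def sample_images(probs, n, rng):
    """Draw n two-pixel images, picking more probable shade pairs more often."""
    flat_index = rng.choice(probs.size, size=n, p=probs.ravel())
    # Convert flat indices back into (pixel_1, pixel_2) shade pairs.
    return np.column_stack(np.unravel_index(flat_index, probs.shape))


new_images = sample_images(probs, 10, rng)
print(new_images)  # ten rows, each a pair of shades between 0 and 255
```

With a million pixels the same table would need 256 to the power of a million entries, which is why it cannot simply be tabulated this way for realistic photographs.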

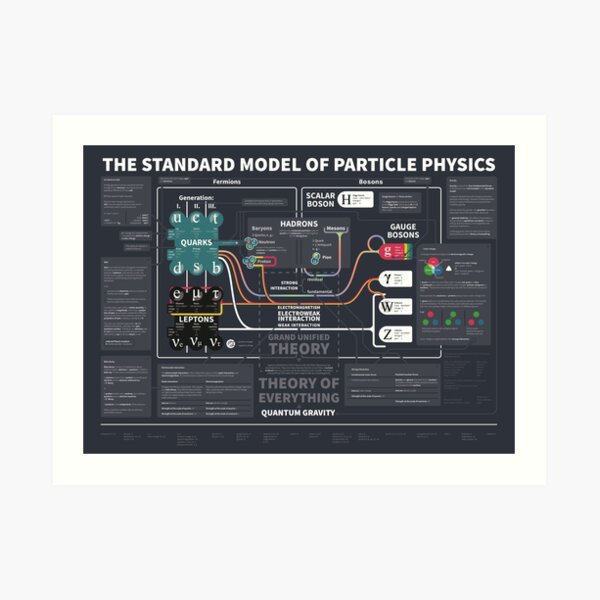

Ask DALL·E 2, an image generation system created by OpenAI, to paint a picture of “goldfish slurping Coca-Cola on a beach,” and it will spit out surreal images of exactly that. The program would have encountered images of beaches, goldfish and Coca-Cola during training, but it’s highly unlikely it would have seen one in which all three came together. Yet DALL·E 2 can assemble the concepts into something that might have made Dalí proud.

DALL·E 2 is a type of generative model - a system that attempts to use training data to generate something new that’s comparable to the data in terms of quality and variety. This is one of the hardest problems in machine learning, and getting to this point has been a difficult journey.

The first important generative models for images used an approach to artificial intelligence called a neural network - a program composed of many layers of computational units called artificial neurons. But even as the quality of their images got better, the models proved unreliable and hard to train. Meanwhile, a powerful generative model - created by a postdoctoral researcher with a passion for physics - lay dormant, until two graduate students made technical breakthroughs that brought the beast to life.

DALL·E 2 is such a beast. The key insight that makes DALL·E 2’s images possible - as well as those of its competitors Stable Diffusion and Imagen - comes from the world of physics. The system that underpins them, known as a diffusion model, is heavily inspired by nonequilibrium thermodynamics, which governs phenomena like the spread of fluids and gases. “There are a lot of techniques that were initially invented by physicists and now are very important in machine learning,” said Yang Song, a machine learning researcher at OpenAI.

The power of these models has rocked industry and users alike.
